You can train neural networks to generate images. This is done by pitting two networks against each other: one learns to tell real images from generated ones, while the other learns to generate images that can fool it. The networks are adversaries, and the technique is called Deep Convolutional Generative Adversarial Networks (DCGAN).
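The adversarial setup boils down to two loss functions. Below is a minimal, framework-free Python sketch of the standard GAN objective, which DCGAN also trains with: binary cross-entropy for the discriminator, and the "non-saturating" generator loss commonly used in practice. It assumes the discriminator outputs a probability that an image is real. The function names are illustrative; a real training loop (in PyTorch or TensorFlow, say) would compute these on tensors and backpropagate through both networks.

```python
import math
from typing import Sequence

EPS = 1e-12  # keeps log() finite if a probability hits exactly 0 or 1


def _safe_log(p: float) -> float:
    return math.log(min(max(p, EPS), 1.0 - EPS))


def discriminator_loss(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """Binary cross-entropy for the discriminator, averaged over each batch.

    d_real: the discriminator's probabilities that real images are real (wants 1).
    d_fake: its probabilities that generated images are real (wants 0).
    """
    real_term = -sum(_safe_log(p) for p in d_real) / len(d_real)
    fake_term = -sum(_safe_log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake: Sequence[float]) -> float:
    """Non-saturating generator loss: low when the discriminator calls fakes real."""
    return -sum(_safe_log(p) for p in d_fake) / len(d_fake)
```

When the discriminator can no longer tell real from fake and outputs 0.5 for everything, its loss settles at 2 ln 2 ≈ 1.386 and the generator's at ln 2 ≈ 0.693.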

This is part of the project Principal Components where we are investigating the use of machine learning technologies with the Norwegian National Museum in collaboration with Audun Mathias Øygard. The project is supported by the Norwegian Arts Council.

Given that national romantic landscapes figure prominently in the collection of the National Museum, it was tempting to train a network to generate new samples.

This new app from OMA and Bengler wants to disrupt the sharing economy

archpaper.com Friday, 16. September 2016

OMA and Bengler propose digital platform to disrupt the sharing economy

www.dezeen.com Friday, 16. September 2016

Bengler is proud to have been awarded Jacob’s prize, the highest recognition from The Foundation for Design and Architecture in Norway. The prize dates back to 1957 and was initially tied to artistic crafts and the Scandinavian design movement. It has since been awarded within a range of design disciplines: interior, landscape, graphic and product design as well as architecture.

Bengler is the first recipient to specialise in design for digital media, and we are especially pleased to receive this award, as we believe craft and material considerations are essential qualities when designing digital services, products and experiences. The lineage of the prize is fitting, and we are honoured to be included in this list of esteemed peers.

By “made” we mean that Simen provided the concept and foundation that composer Rolf Wallin and British playwright Mark Ravenhill transformed into the opera Elysium, expertly realized by musical director Baldur Brönnimann, director David Pountney, set designer Leslie Travers and the awesome soloists, chorus and production team at The Norwegian National Opera.

It just finished its initial run in Oslo and was branded “a triumph” by Shirley Apthorp of the Financial Times, and “built on a strong concept” and “a living, well considered work” by Hild Borchgrevink of Dagsavisen.

Rolf Wallin and Simen Svale Skogsrud at the Opera. Photo: Jimmy Linus/D2

In the opera, the bulk of humanity has evolved into immortal transhuman beings with superhuman empathy and communication abilities. A small group of holdouts is preserved on an island sanctuary as a memento of transhumanity’s origins. The opera explores the drama of profound change and poses the question: Is it more important to preserve or evolve humanity?

Elysium will become available for streaming via The Opera Platform – date unknown.

Stage photos: Erik Berg / DNO&B

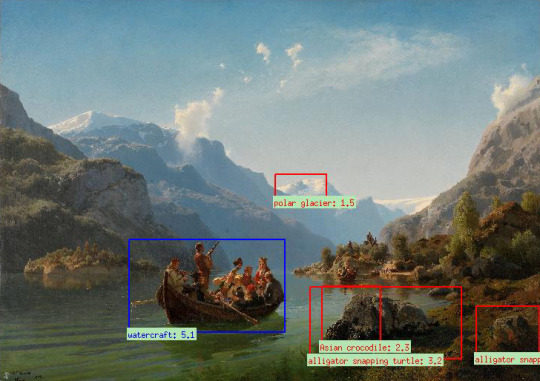

We’re starting a new project with the Norwegian National Museum looking at how machine learning and deep neural networks can be applied to their collections, both to add metadata easily and simply to explore the context-free gaze of machine classification. A cognitive counterpart to scary robots, machine vision in its current state sits uncomfortably in the uncanny valley of human facsimile.

Misclassifications 1: Edvard Munch - Self-Portrait with Burning Cigarette and Hippo Snout

Misclassifications 2: Adolph Tidemand & Hans Gude - Bridal Procession on the Hardangerfjord amongst Alligator Snapping Turtles

At the moment we’re doing some initial tests. A model has been retrained to classify periods in art history using artworks from Wikimedia. The retrained model has in turn been run on the museum’s collection to lay out maps of paintings based on their perceived similarity. Preliminary results are promising and could already be used to build novel user interfaces that aid comprehension and exploration of collections.

A scattering of national romantic landscapes laid out with t-distributed stochastic neighbor embedding (t-SNE)
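The post doesn't say how "perceived similarity" is measured. A common approach, assumed here, is to take a feature vector for each painting from the retrained network (for example its penultimate layer) and compare vectors with cosine similarity; a t-SNE implementation such as scikit-learn's `sklearn.manifold.TSNE` then projects those vectors onto a 2D map. The sketch below shows only the similarity step, and the painting names and vectors are made-up toy values.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """1.0 for vectors pointing the same way, 0.0 for orthogonal ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest_neighbours(features: Dict[str, Sequence[float]],
                       query: str, k: int = 3) -> List[Tuple[str, float]]:
    """Return the k paintings whose (hypothetical) feature vectors are most similar."""
    q = features[query]
    scored = [(name, cosine_similarity(q, vec))
              for name, vec in features.items() if name != query]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]
```

With `{"fjord_a": [1, 0], "fjord_b": [0.9, 0.1], "portrait": [0, 1]}`, the nearest neighbour of `fjord_a` is `fjord_b`. Paintings that score high against each other are the ones t-SNE tends to place close together on the map.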

On this project we are working alongside Audun Mathias Øygard. Audun earned a degree from the Oslo Academy of Arts before studying mathematics at UiO. He recently finished his graduate work on image classification using convolutional neural networks.

This project follows up on a project with the museum from two years ago, Repcol, an exploratory data visualization investigating representativity in the collections.

Principal Components is funded by the National Arts Council and the Norwegian National Museum.

So, this fall. Where to begin? We built an omnivorously sensing website for OMA while refining the metadata on all their projects, as well as updating our in-house content curation framework, Sanity.

Front page OMA.EU at launch

Right after summer, Loop and their pelvic floor exerciser disrupted their way out of our bleeding-edge co-working decelerator after being acquired by the mammoth Canadian sex toy manufacturer Standard Innovation.

PK was away on possibly blissful paternity leave. We built a topical statistics portal on integration and immigration as an experiment in working for the government.

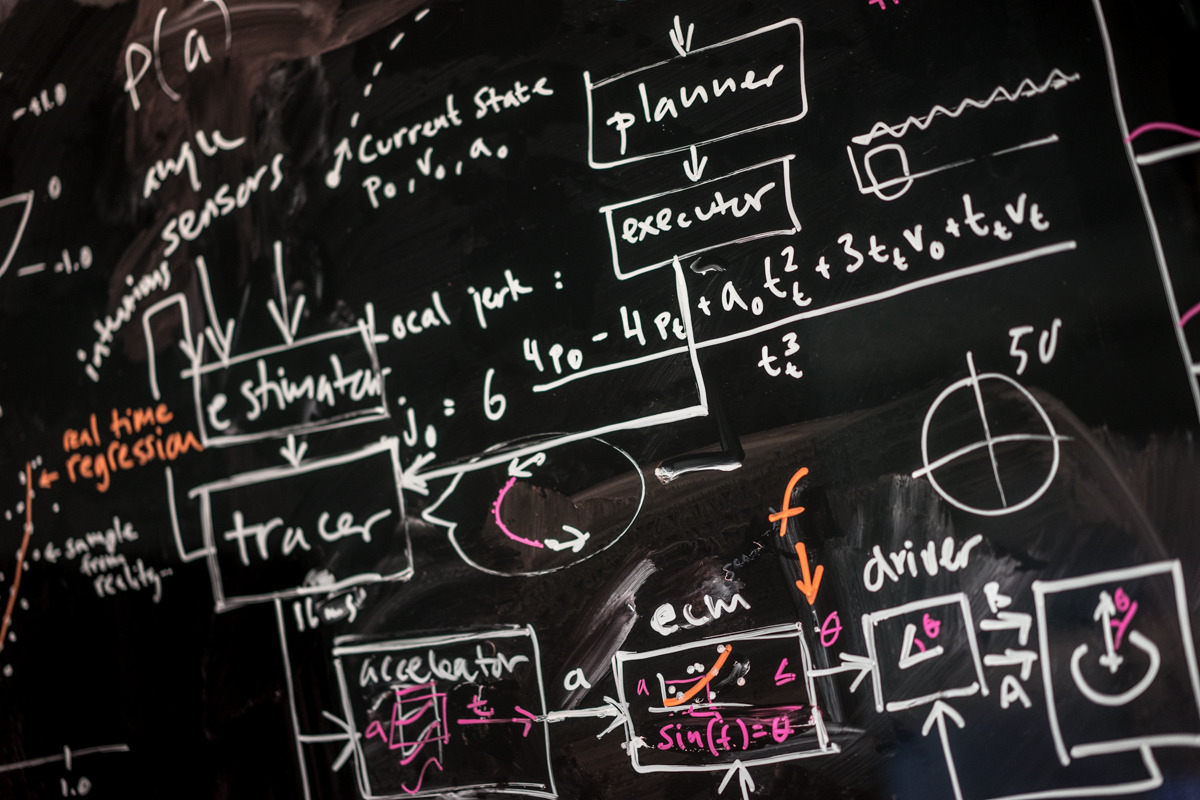

Simen redid the control software for the next iteration of the Alta to support closed-loop control, while Even spent a week visiting The ACT at MIT, a historic early collider of art and technology.

ACT sitting comfortably inside the Wiesner Building

Simen’s Blackboard

We’re involved in 3 different research projects funded by the National Research and Arts Councils with HIOA, SINTEF and The Norwegian National Museum. This in addition to a steady stream of work for long term clients.

With design specification work on Vega in full swing and its year-long production run beginning in January 2016, next year is already looking pretty interesting. So we have been informally talking to people, and luckily, Human Resources has been in touch with updates.

In January we will proudly be welcoming Jakob and Espen.

Espen Hovlandsdal is a passionate builder of web software, as attested by his many open source projects. He comes to us from Norway’s largest newspaper, VG.

Espen Hovlandsdal

Jakob Bak has spent the last four years at the research unit of the Copenhagen Institute of Interaction Design (CIID), developing projects within interaction design and education. He is a member of the Copenhagen-based art and technology collective Science Friction and a DIY synthesizer enthusiast.

Jakob Bak

Can’t wait to have you both here!

Vega, our upcoming Mellon-funded academic publishing system, has a bright new website: vegapublish.com

Magic disco ball tells you everything you need to know about climate change

grist.org Saturday, 13. December 2014

Global Weirding shows you what the future of Earth might look like (if we let it)

www.treehugger.com Thursday, 11. December 2014

Unless you have Lego-building kids, Legowish.com has probably passed under your radar. This is an official Lego website where kids can add products to a wish list, watch videos and trigger assorted animations by clicking around and exploring the site. Every played video and animation earns points, and the more points harvested, the greater the chance to win in a product lottery. There’s both the short-term payoff of seeing your points increase and the carrot: a shiny toy dangling somewhere in the distance.

Seen in action, this comes down to kids getting addicted to the collection of points. The currency kids spend to acquire these points is two-fold: time and exposure to propaganda.

Having spent countless hours myself building Lego as a kid, I have a warm fuzzy place in my heart for this particular toy brand. Thus, my guard was down, and it took me a while to acknowledge that legowish.com really is just a disagreeable corporate brainwash engine.

So, the kids and I built an engine of our own to beat Lego at this lame game of clicking and logo worship. Our natural weapon of choice: Lego.

Behold! A mouse clicking robot!

I believe we succeeded in several ways. The three-year-old appreciated the geared machine, which makes Yoda move and the on-screen animation play continuously. The six-year-old had the dawning realisation that his engagement could be reduced to that of a mindless robot. For my own part, I was once again reminded that when forced to play somebody else’s stupid game, you should always try to break free and rewrite the rules.

I guess we owe Lego our thanks, their sinister site made us build something cool :-)

Make Magazine took note of our 3D printer at Maker Faire Oslo: “After a while all 3D printers start to look the same, but there are a few that are different. I talked to Simen Svale Skogsrud, from Polarworks here in Norway, about their upcoming Alta printer.”

http://makezine.com/2014/08/30/a-new-type-of-3d-printer-at-maker-faire-trondheim/

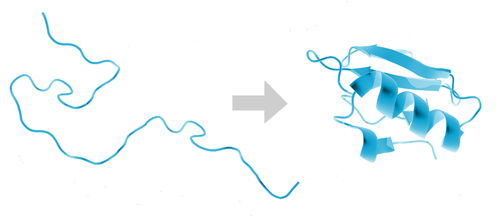

Our non-critical server systems are now running protein folding simulations in their spare time, as part of Stanford University’s Folding@home project. Understanding how proteins fold has important applications in medicine and molecular biology.

The project’s distributed computing cluster is currently one of the world’s most powerful at 21 petaFLOPS - an order of magnitude more than the world’s fastest supercomputer - and the results have contributed to over 100 scientific papers.

We have currently allocated 86 CPU cores across 5 nodes to the project, and may extend this to include underutilized production systems if it proves stable enough. You can follow our progress on our team page.

Our widely adopted open source CNC controller GRBL used to be licensed under the LGPL, a strict license sometimes dismissed as “viral” because it requires all software that builds on GRBL to also be open source.

Lately we have been approached by a number of actors wanting to use GRBL in projects that, for business reasons, are hard to fully open source. To allow even wider adoption, the contributors have opted to relicense all of it under the permissive MIT license, which essentially allows GRBL to be repurposed for any use whatsoever.

The only thing we kindly ask in return is that due credit is given.

A special thanks to current lead developer Sonny Jeon for his impeccable stewardship.

Thomas rushed a copy of Printing Things: Visions and Essentials for 3D Printing from Die Gestalten Verlag back from ThingsCon 2014, ahead of our contributor’s copy. It provides a thorough overview of the field, and it feels great to have Terrafab included.

tl;dr We’re building an extravagantly simple and efficient 3d printer. Learn about our progress by signing up on Polarworks.

Four years ago Simen sat down and wrote GRBL, motion control software for machines that make things. It worked for us (it ran a little CNC mill we used to have in our office), and it has also worked for hundreds of other DIY projects that shape things by cutting with metal, burning with lasers or laying down minute quantities of molten plastic.

After completing the project, Simen tried to think of simpler ways of building 3D printers. He came upon the idea of using two rotational axes instead of gantries for the X and Y axes. This cuts the part count radically and makes the printer nearly silent: no linear bearings, no timing belts, no gears, just a few slabs of solid metal and precision motors. It also looks excellent when the printer draws a completely straight line by twisting around.
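Why a straight line makes the machine "twist around" becomes clear from the inverse kinematics. The post doesn't describe the printer's exact geometry, so this sketch assumes a two-link arm with two rotary joints (a SCARA-style layout) as a stand-in; the link lengths are arbitrary units. Each point on a straight Cartesian segment is converted to a pair of joint angles, and both angles change non-linearly along the way.

```python
import math
from typing import List, Tuple


def inverse_kinematics(x: float, y: float, l1: float, l2: float,
                       other_solution: bool = False) -> Tuple[float, float]:
    """Joint angles (radians) that put the tip of a two-link arm at (x, y)."""
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= c2 <= 1.0:
        raise ValueError("point is out of reach")
    s2 = math.sqrt(1.0 - c2 * c2)
    if other_solution:
        s2 = -s2  # mirror-image elbow configuration
    theta2 = math.atan2(s2, c2)
    theta1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return theta1, theta2


def forward_kinematics(theta1: float, theta2: float,
                       l1: float, l2: float) -> Tuple[float, float]:
    """Tip position for the given joint angles (used to check the inverse)."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def straight_line(start: Tuple[float, float], end: Tuple[float, float],
                  l1: float, l2: float, steps: int = 10) -> List[Tuple[float, float]]:
    """Sample a straight XY segment and convert every sample to joint angles."""
    path = []
    for i in range(steps + 1):
        t = i / steps
        x = start[0] + t * (end[0] - start[0])
        y = start[1] + t * (end[1] - start[1])
        path.append(inverse_kinematics(x, y, l1, l2))
    return path
```

A controller would then step both motors through these angle pairs in lockstep; the finer the sampling, the straighter the drawn line.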

We built a prototype. Printed one part. Took 3 pictures of it and promptly went off to think about other things.

Our first and only part

It did bother me though. That we had the world’s simplest 3d printer standing in our office, gathering dust.

Last spring, when teaching at the school of design and architecture, I came across two industrial design students, Hans Jakob Føsker and Alexandre Chappel, who were well aware of GRBL, as they had built their own Rostock-style delta 3D printers in a windowless room in the school’s basement.

Together we decided to blow the dust off our old prototype and design a printer for a production run. We also had the good fortune to run into Thomas Boe-Wiegaard, a mechanical engineer with an aerospace background, who has joined the project and really helped us sharpen our drawings and specifications.

We’ve been working on it for a few months. We have a brilliant design we are totally in love with. A test batch of precision aluminum parts from Italy. Schematics for a PCB. Tables covered with stepper motors, scopes and accelerometers. We’re writing firmware for ARM! Something that we’ve wanted to explore for a long time. It’s been great fun and we can’t wait to start making the movies and websites to show you exactly what our little machine can do and the design goals we have.

If you want to know how we’re doing you can sign up for our newsletter on polarworks.no.

Thanks here must also go to Einar Sneve Martinussen and Nicholas Stevens who supervised the process at AHO and provided valuable input along the way.

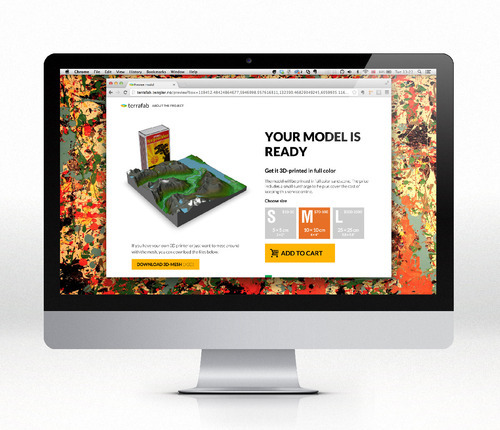

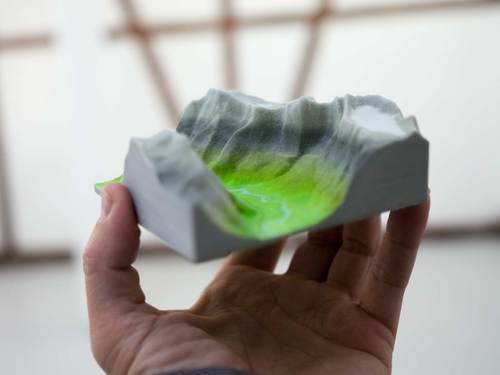

We have received a lot of feature requests for Terrafab since its release a month ago. The most common wish has been for bigger models, so in addition to the current 10cm × 10cm we’ve added a whopping 25cm × 25cm option. If you just want a tiny trinket, we’ve also added a 5cm × 5cm version.

Because Shapeways charges by the cubic centimeter, the small model costs between $10 and $50. The large model, though, starts at $500 and goes all the way up to $2500 if you go really mountainous.

Own a slice of Norwegian fjord thanks to Terrafab and 3D printing (Wired UK)

www.wired.co.uk Tuesday, 22. October 2013

GRBL now has a new Arduino shield! This one is made by Bertus Kruger of Protoneer. He’s cooking up a batch and you’ll be able to order them on his site.

Instagram user eivind1983 says: How cool is a mini-version of a mountain? Incredibly cool! I have already discovered a place I never noticed before, and that I will visit asap. Hooray!

In the haze following the release of Mapfest, we’ve picked up Repcol again to meet its 10 October deadline. Repcol aims to pack the available metadata about the collections of the National Museum into one dynamic illustration.

Before the Mapfest crunch set in, we completed the data wrangling, so it was more or less ready to start assembling into visuals. We lost almost half of the 60k works due to lack of data coverage, which is kind of sad, but I think there’s still enough for it to be interesting.
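Losing works "due to lack of data coverage" typically means dropping records that miss a field the visualization depends on. The sketch below shows that filtering step plus a per-field coverage report; the field names are hypothetical, since the museum's actual schema isn't described here.

```python
from typing import Any, Dict, List, Sequence, Tuple

Record = Dict[str, Any]

# Hypothetical field names: the museum's real schema is not given in the post.
REQUIRED_FIELDS = ("artist", "gender", "production_year", "acquisition_year")


def has_value(record: Record, field: str) -> bool:
    """A field counts as covered if it is present and not empty."""
    return record.get(field) not in (None, "")


def split_by_coverage(records: Sequence[Record],
                      required: Sequence[str] = REQUIRED_FIELDS
                      ) -> Tuple[List[Record], List[Record]]:
    """Keep only works that have every field the visualization needs."""
    kept: List[Record] = []
    dropped: List[Record] = []
    for record in records:
        if all(has_value(record, field) for field in required):
            kept.append(record)
        else:
            dropped.append(record)
    return kept, dropped


def coverage_report(records: Sequence[Record],
                    required: Sequence[str] = REQUIRED_FIELDS) -> Dict[str, float]:
    """Share of records (0.0 to 1.0) that have each required field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required}
    return {field: sum(has_value(r, field) for r in records) / total
            for field in required}
```

The coverage report is the useful part when deciding which fields to require: a single sparsely filled field can account for most of the dropped works.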

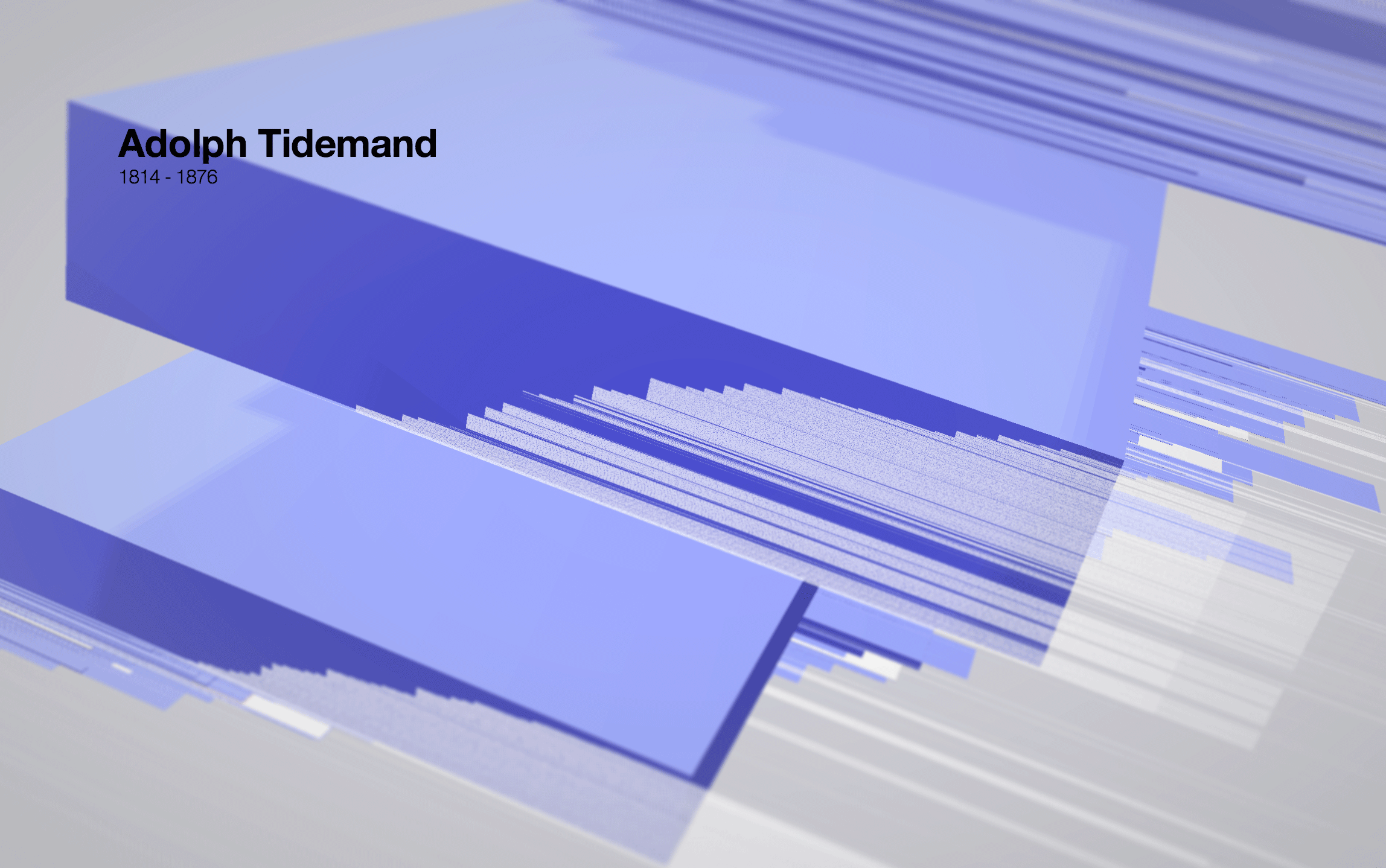

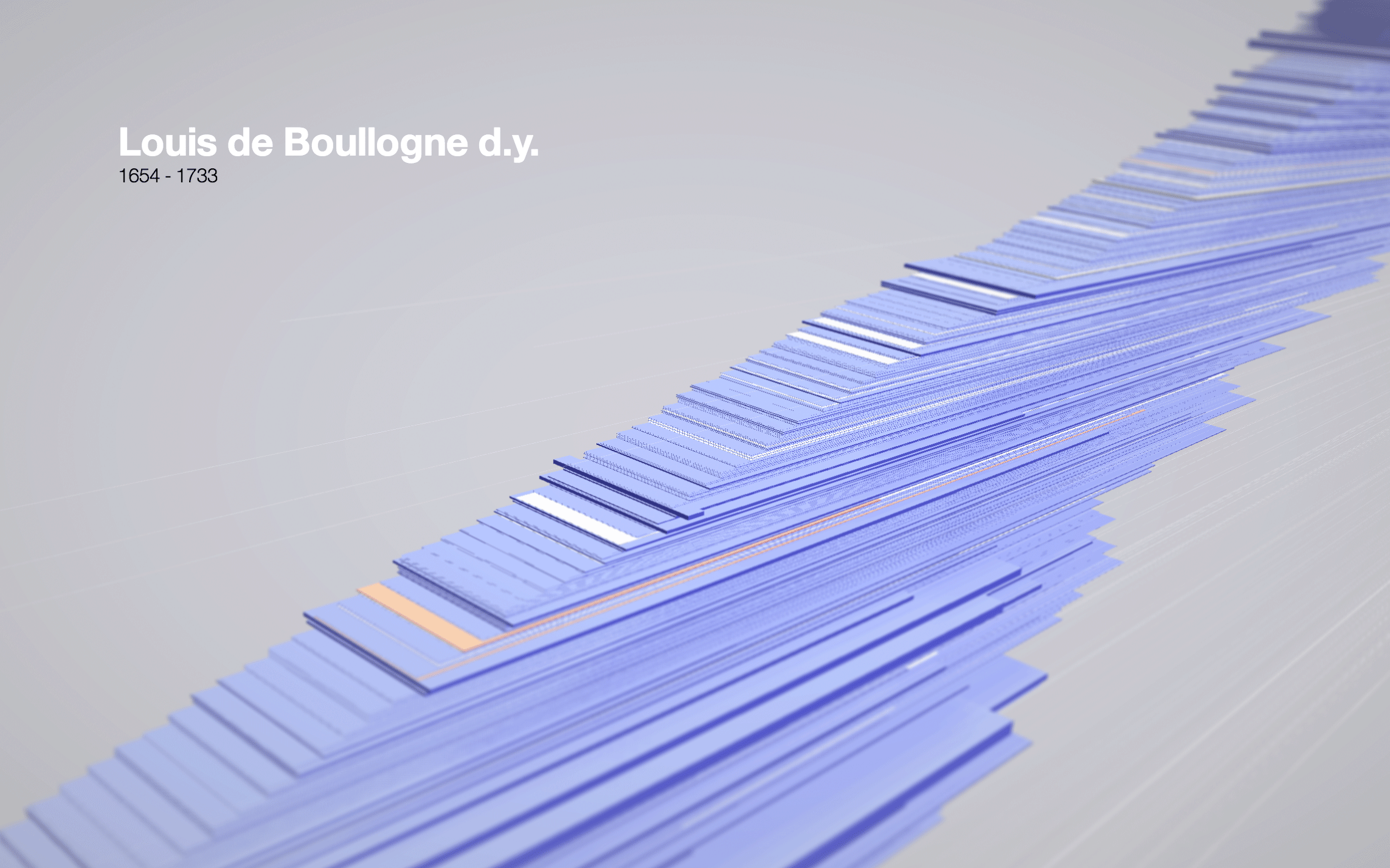

We’ve settled on a simple timeline view, with each artwork packed upwards along the y-axis. Each artist is represented by a tetragonal prism (yes, a box) colored by gender, and each work they produced by a thin line along one face.

And yes, I know: enough with the data sculpture already. I could enumerate the reasons why this is a reprehensible representation in terms of legibility and how aggregation would be so much better suited. Instead I’ll post some pictures here and go back to tweaking my tilt-shift parameters and revelling in my open brief.

Adolf Tidemand: the box shows gender and the number of works collected. Work creation and acquisition are shown by white lines. Gaps in the lines along the y-axis show gaps in the archive metadata. The works were acquired in two batches, perhaps as a gift to the museum.

Surprise. Few women figure in the collection strategies that acquired work from the 1600s.

The close-ups of the model are fairly nice to look at. We’re still not completely happy, though, with the strange wing-like shape the whole thing occupies in space. There is also code to write to make the structure easily navigable and searchable, and, not least, to actually display the works of the chosen artist.

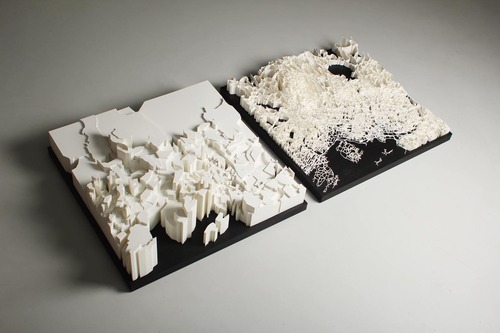

On Friday we launched Mapfest, after spending days and nights since the beginning of September wrangling data and building software. The project started with the Norwegian Mapping Authority giving us a head start with a large package of geospatial data they were going to license freely.

To give everyone a flying start with the data, we’re packaging it up as a ready-to-run VM. We also built a service where anyone can order a custom-printed slice of Norway from Shapeways right from the browser, and we laser-sintered two sculptures from the data.
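The post doesn't describe how elevation data becomes a printable slice of Norway. As a generic illustration only, not the Mapfest or Terrafab pipeline, the sketch below triangulates the top surface of a regular elevation grid and writes it as ASCII STL, a format 3D printing services accept. The grid, cell size and vertical scale are assumptions, and a closed printable solid would also need side walls and a base, which are omitted here.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]


def heightmap_to_triangles(heights: Sequence[Sequence[float]],
                           cell_size: float = 1.0,
                           z_scale: float = 1.0) -> List[Triangle]:
    """Triangulate the top surface of a regular elevation grid.

    heights[row][col] is the elevation at one grid node; every grid cell
    becomes two triangles wound counter-clockwise when seen from above.
    """
    def vertex(r: int, c: int) -> Vec3:
        return (c * cell_size, r * cell_size, heights[r][c] * z_scale)

    triangles: List[Triangle] = []
    for r in range(len(heights) - 1):
        for c in range(len(heights[0]) - 1):
            a, b = vertex(r, c), vertex(r, c + 1)
            d, e = vertex(r + 1, c), vertex(r + 1, c + 1)
            triangles.append((a, b, e))
            triangles.append((a, e, d))
    return triangles


def facet_normal(tri: Triangle) -> Vec3:
    """Unit normal from the cross product of two triangle edges."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = tri
    ux, uy, uz = bx - ax, by - ay, bz - az
    vx, vy, vz = cx - ax, cy - ay, cz - az
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz) or 1.0
    return (nx / length, ny / length, nz / length)


def to_ascii_stl(triangles: Sequence[Triangle], name: str = "terrain") -> str:
    """Serialize triangles as an ASCII STL solid."""
    lines = [f"solid {name}"]
    for tri in triangles:
        nx, ny, nz = facet_normal(tri)
        lines.append(f"  facet normal {nx:e} {ny:e} {nz:e}")
        lines.append("    outer loop")
        for x, y, z in tri:
            lines.append(f"      vertex {x:e} {y:e} {z:e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines)
```

An n × m grid yields 2(n − 1)(m − 1) triangles, so mesh size, and with it file size, grows with the resolution of the elevation data.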

We had great fun with this project and would have posted pictures from the release party on Friday if we hadn’t been too tired and euphoric to remember to actually take any.

We got our Oculus Rift dev kit last week. DHL delivered it some time this summer. We were all over Europe at the time, but PK was able to take delivery. It appears he spent a good chunk of his vacation inside Half-Life 2. “Didn’t we get the Oculus this summer?” He gave it up reluctantly. We had to let him keep it over the weekend before he brought it in. Then we all had a go.

Simen Skogsrud enjoying Virtual Reality

I never got to try any of the high-end VR gear in the early 90s. The closest I ever got to first-wave VR was about fifteen years ago in a CAVE automatic virtual environment at Telenor Expo here in Oslo. CAVEs put you in a physical room surrounded on all sides by projection surfaces. Using active shutter glasses and head tracking, it virtually blows out all four walls and potentially even the floor and ceiling. I held a manipulation pen that shot bright green vector pixels precisely from its tip. It took me on a virtual tour of a South African mine. It let me play with an interactive aerodynamics simulation, moving around like a ghost through the car body. The illusion was so vivid it got kind of unclear whether it was my body or the simulation that was immaterial.

Like after a day on a rolling boat, or a newbie afternoon on a snowboard, I stumbled onto the sidewalk with an unsure gait. I don’t generally recall any specifics from the late 90s. But the CAVE is still vibrant.

This is not what VR feels like

Musing enthusiastically on the technological premise of The Lawnmower Man is hard. It is difficult to be elated by stuff submerged at the bottom of the trough of disillusionment. So I took to the webs and revisited the time when VR crested the hype cycle. Here is John Perry Barlow writing in Mondo 2000 in 1990, in “Being in Nothingness”:

And I don’t seem to have a location exactly. In this pulsating new landscape, I’ve been reduced to a point of view. The whole subject of “me” yawns into a chasm of interesting questions. It’s like Disneyland for epistemologists. “If a virtual tree falls in the computer-generated forest..?” Or “How many cybernauts can dance on the head of a shaded solid?” Gregory Bateson would have loved this. Wittgenstein, phone home.

Despite the current confines of my little office-island, I know that I have become a traveller in a realm which will be ultimately bounded only by human imagination, a world without any of the usual limits of geography, growth, carrying capacity, density or ownership. In this magic theater, there’s no gravity, no Second Law of Thermodynamics, indeed, no laws at all beyond those imposed by computer processing speed…and given the accelerating capacity of that constraint, this universe will probably expand faster than the one I’m used to.

Which brings me to a point which makes Jaron Lanier very uncomfortable. The closest analog to Virtual Reality in my experience is psychedelic, and, in fact, cyberspace is already crawling with delighted acid heads.

The reason Jaron resents the comparison is that it is both inflammatory (now that all drugs are evil) and misleading. The Cyberdelic Experience isn’t like tripping, but it is as challenging to describe to the uninitiated and it does force some of the same questions, most of them having to do with the fixity of reality itself.

This last part is what unsettles me about the Oculus Rift. Once you strap it on and start walking about in one of the tech demos, reality instantly recedes. Consider the vertiginous effect of leaning back and looking down past the awning of a balcony doorway onto the room below.

As an aside, these stories offer a picture of a younger Jaron Lanier, in his 20s, before he decided the stuff his friends came up with would lead to, erm, “Digital Maoism.” I like the unalloyed, positive technological determinism, the promise of a shinier technological epistemology, in this article from the NYTimes:

«It’s a new level of reality,» said Mr. Lanier, a 28-year-old programmer who has become a guru of the artificial reality movement. «There’s never been another one except for the physical world, unless you believe in psychic phenomena.»

I like that: the NYTimes writing about an “artificial reality movement” in 1989. Very 90s, like a movement for ahistorical immaterialism, and reminiscent of the neocons under Bush Jr.:

The aide said that guys like me were “in what we call the reality-based community,” which he defined as people who “believe that solutions emerge from your judicious study of discernible reality.” … “That’s not the way the world really works anymore,” he continued. “We’re an empire now, and when we act, we create our own reality. And while you’re studying that reality—judiciously, as you will—we’ll act again, creating other new realities, which you can study too, and that’s how things will sort out. We’re history’s actors…and you, all of you, will be left to just study what we do.”

But I’m straying from the topic at hand.

New virtual reality simulator teaches Airmen how to fall 3/7/2013

What I really wanted to say was that John Perry Barlow is spot on. When you strap the Rift to your head, reality does recede. The voices of the people in the room come from far away. They seem bothersome, demonic, at odds with where you actually are. This sense of being dislocated into new spaces holds great promise. It promises great utility, fantastic entertainment, and incredible social ills.

Party preparation: building a 12x12 matrix from rows of super bright LED strips. The LED strips were DMX-controlled and came with a UDP bridge that we just hooked up to our router. We wrote some JavaScript to control it; the code is on GitHub (it includes psychedelic patterns and a resampled 12x12 Super Mario walk cycle).
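The actual controller was JavaScript and lives on GitHub; as an illustration of the core step, here is a Python sketch that flattens a 12x12 RGB frame into one 512-channel DMX universe (12 × 12 × 3 = 432 channels used). Several details are assumptions, not taken from the post: three channels per pixel in R, G, B order, pixels row by row with optional back-and-forth (serpentine) wiring, and a bridge that accepts the raw channel bytes as a UDP payload. Many bridges expect a framed protocol such as Art-Net instead, so `send_frame` is hypothetical.

```python
import socket
from typing import Sequence, Tuple

RGB = Tuple[int, int, int]
WIDTH = HEIGHT = 12
CHANNELS_PER_PIXEL = 3      # assumption: one R, G, B triple per pixel
DMX_UNIVERSE_SIZE = 512     # a DMX512 universe carries 512 channel values


def frame_to_dmx(frame: Sequence[Sequence[RGB]], serpentine: bool = False) -> bytes:
    """Flatten a 12x12 RGB frame into a 512-byte DMX channel buffer.

    With serpentine=True every other row is reversed, for strips that are
    wired back and forth; unused channels stay at 0.
    """
    if len(frame) != HEIGHT or any(len(row) != WIDTH for row in frame):
        raise ValueError("frame must be 12 rows of 12 pixels")
    data = bytearray(DMX_UNIVERSE_SIZE)
    channel = 0
    for y, row in enumerate(frame):
        pixels = list(row)
        if serpentine and y % 2 == 1:
            pixels.reverse()
        for r, g, b in pixels:
            data[channel:channel + CHANNELS_PER_PIXEL] = bytes((r, g, b))
            channel += CHANNELS_PER_PIXEL
    return bytes(data)


def send_frame(payload: bytes, host: str, port: int) -> None:
    """Hypothetical: send raw channel data to the bridge as one UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))
```

Animations such as a walk cycle then come down to generating one frame per tick and sending each buffer at a steady rate.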

Quick Daft Punk LED Matrix Display w/ Attiny13 Hack

So what do you do when one of your friends has a six-year-old who really likes building Daft Punk helmets? I had some ATtinys, a Maxim MAX7219 LED driver and a generic old 8x8 LED matrix lying in a closet, and spent a couple of evenings stringing them together and writing some code. With so little memory, though, you find your code running into stack space before you get much work done. Also, a nice tool called The Dot Factory, which outputs Windows fonts as C arrays, saved me at least an hour.

If you want one, you can find the source on GitHub.
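On the ATtiny the driver code would be C, but the MAX7219's register protocol is easy to show in Python. The chip takes 16-bit words, an address byte followed by a data byte, latched by pulsing its LOAD/CS line; registers 0x01 to 0x08 hold the eight digit rows, while 0x09, 0x0A, 0x0B, 0x0C and 0x0F set decode mode, intensity, scan limit, shutdown and display test. Which physical matrix row ends up on which digit register depends on the wiring, so that mapping is an assumption here, as is the example glyph.

```python
from typing import List, Sequence, Tuple

# MAX7219 register addresses.
REG_DIGIT0 = 0x01         # digit registers 0x01..0x08, one per matrix row
REG_DECODE_MODE = 0x09
REG_INTENSITY = 0x0A
REG_SCAN_LIMIT = 0x0B
REG_SHUTDOWN = 0x0C
REG_DISPLAY_TEST = 0x0F

Write = Tuple[int, int]   # (register address, data byte)


def init_sequence(intensity: int = 0x08) -> List[Write]:
    """Register writes that bring the chip up for driving a raw 8x8 matrix."""
    return [
        (REG_DISPLAY_TEST, 0x00),           # normal operation, not lamp test
        (REG_DECODE_MODE, 0x00),            # no BCD decoding: one bit per LED
        (REG_SCAN_LIMIT, 0x07),             # scan all eight digit registers
        (REG_INTENSITY, intensity & 0x0F),  # brightness, 16 steps
        (REG_SHUTDOWN, 0x01),               # leave shutdown mode
    ]


def glyph_to_writes(rows: Sequence[int]) -> List[Write]:
    """Map an 8-byte glyph (one byte per row, as in a C font array) to digit registers."""
    if len(rows) != 8:
        raise ValueError("an 8x8 glyph needs exactly 8 row bytes")
    return [(REG_DIGIT0 + i, row & 0xFF) for i, row in enumerate(rows)]


def to_spi_words(writes: Sequence[Write]) -> List[bytes]:
    """Each write is shifted out MSB first: address byte, then data byte."""
    return [bytes((register, data)) for register, data in writes]


# Hypothetical example glyph: a small heart, top row first.
HEART = [0x00, 0x66, 0xFF, 0xFF, 0x7E, 0x3C, 0x18, 0x00]
```

Because the chip refreshes the matrix on its own, the microcontroller only has to send these few bytes when the picture changes, which suits a part with very little RAM.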

We want to make a semi-automated brewery using an Arduino Due and a Raspberry Pi. The source code for the software is available from the Brewtroller project. To make it more open, we want to use I2C relay boards for the motorized ball valves and heating. For temperature, 1-Wire is awesome.
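Two small pieces show how temperature sensing and heating could fit together. The post only says "One Wire", so this assumes the common DS18B20 sensor, whose 16-bit temperature register is two's complement at 1/16 °C per bit in 12-bit mode. The heater logic is a plain hysteresis (bang-bang) loop driving a relay, a deliberately simple stand-in rather than whatever control scheme Brewtroller itself uses; the class name, set point and band are illustrative.

```python
def ds18b20_raw_to_celsius(raw: int) -> float:
    """Convert a DS18B20 16-bit temperature register value to degrees Celsius."""
    raw &= 0xFFFF
    if raw & 0x8000:        # sign bit set: negative temperature
        raw -= 0x10000
    return raw / 16.0       # 12-bit resolution: 0.0625 degrees per bit


class HysteresisHeater:
    """Switch a heating relay on below setpoint - band and off above setpoint + band."""

    def __init__(self, setpoint_c: float, band_c: float = 0.5) -> None:
        self.setpoint_c = setpoint_c
        self.band_c = band_c
        self.heating = False

    def update(self, temperature_c: float) -> bool:
        """Return the new relay state; inside the band the previous state is kept."""
        if temperature_c <= self.setpoint_c - self.band_c:
            self.heating = True
        elif temperature_c >= self.setpoint_c + self.band_c:
            self.heating = False
        return self.heating
```

The dead band keeps the relay from chattering on and off when the mash hovers right at the set point.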

For inspiration we have Black Heart Brewery for the automated brewtroller system, and The Electric Brewery for the hardware.

Milk is for babies. When you grow up you have to drink beer.

Arnold Schwarzenegger

A video of a brewing control panel using Brewtroller

Training, training, training. I’m consistently at 40 wpm now, but I want to reach at least 50 by tomorrow. The goal is 70, and it feels reachable. Most of the speed loss comes from mentally verifying a fast sequence, only to find, more often than not, that I had performed it correctly but unconsciously.
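The post doesn't say how the wpm figures are measured. A common convention, assumed here, counts one "word" as five characters including spaces and subtracts uncorrected errors per minute to get net speed.

```python
def words_per_minute(characters: int, seconds: float, uncorrected_errors: int = 0) -> float:
    """Net typing speed, using the convention of five characters per word.

    characters counts every typed character, spaces included; uncorrected
    errors are subtracted per minute, and the result never goes below zero.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    minutes = seconds / 60.0
    gross_wpm = (characters / 5.0) / minutes
    net_wpm = gross_wpm - uncorrected_errors / minutes
    return max(net_wpm, 0.0)
```

By this measure 40 wpm means 200 characters a minute, and the goal of 70 wpm means 350.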

Today’s blank. Red this time. Feels good in the hand, and fits in a jeans pocket alongside the phone. A few tweaks and it is time to transform this into a fully working version.